Detecting areas affected by the great earthquake in Turkey and Syria using satellite images

In February 2023, a devastating earthquake struck the border region of Turkey and Syria. It caused enormous damage in both countries, and according to media reports more than 50,000 people have died (JP, ENG). As of March 2023, aftershocks continue and millions of people have had to evacuate because of collapsed buildings. In a disaster of this scale, it is important to quickly identify the affected areas where buildings or infrastructure have been destroyed. Earth observation satellites can capture images of large regions even when local infrastructure does not exist or is no longer usable. We therefore investigated whether the affected areas can be detected from high-resolution satellite images using machine learning algorithms.

(The Japanese version is available here)

Satellite data

Test data

Maxar Inc., a US-based space technology company, releases large amounts of open data for areas affected by major disasters such as earthquakes, floods, and volcanic eruptions. The images in this case were captured by satellites of the WorldView series, which currently has the world’s highest overall precision, with the following resolutions:

- spatial resolution: 30 cm/pixel

- spectral resolution: 8 bands

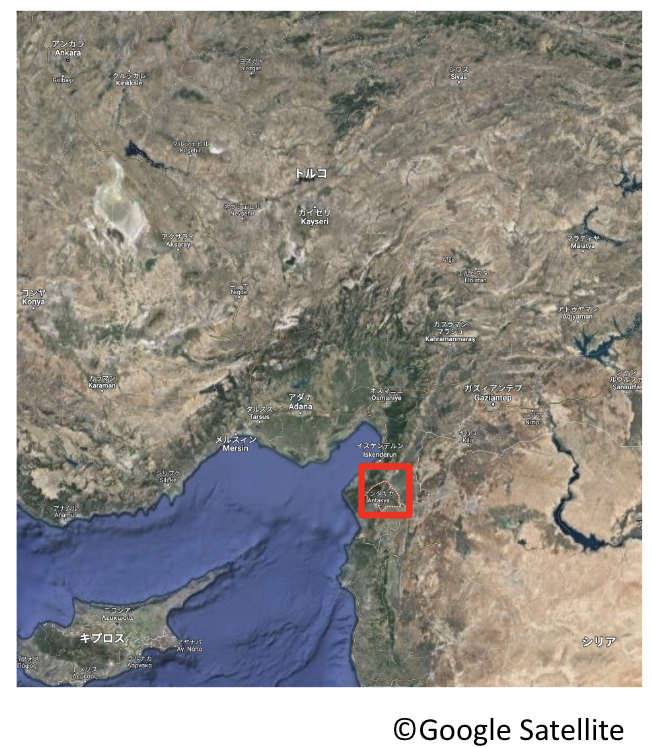

For this analysis, images captured before the earthquake (December 12th, 2022) and after the earthquake (February 8th, 2023) were used to test the accuracy of the algorithm. Antakya City in southern Turkey was selected as the target area because it was one of the most severely damaged areas.

Training data

Our model uses supervised machine learning, which requires labeled data for training. For this, we use an open dataset called xView. Like the test data, the xView dataset contains images from the WorldView satellites, but the image conditions are different. The data covers over 1,000 square kilometers across multiple countries around the world, including the United States. The dataset also includes 60 classes of labels for man-made objects in the images.

Methodology

Semantic segmentation

Semantic segmentation is a technique in which each pixel of an image is assigned to exactly one class from a fixed set. To identify the areas affected by the earthquake, we detect damaged buildings, because they are a robust indicator of the destruction caused. The training dataset described above, xView, contains labels for ‘buildings’ and ‘damaged buildings’. These two labels were extracted so that we could apply a 3-class segmentation to the data (‘buildings’, ‘damaged buildings’, ‘other’). However, in the xView data the number of labels for ‘damaged buildings’ is much smaller than the number for ‘buildings’. Therefore, only the parts of the training data that contain both labels were selected, and this subset was then expanded through data augmentation.
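The selection and augmentation step could be sketched roughly as follows. This is a minimal illustration, not the code used in this study: the class indices, the function names, and the choice of rotations and flips as augmentations are all assumptions, and the tiles are assumed to be square NumPy arrays with a per-pixel class mask.

```python
import numpy as np

# Hypothetical class indices for the 3-class masks (not from the article).
OTHER, BUILDING, DAMAGED = 0, 1, 2


def has_both_labels(mask):
    """True if the mask contains intact and damaged building pixels."""
    return bool(np.any(mask == BUILDING) and np.any(mask == DAMAGED))


def augment(image, mask):
    """Yield 8 variants of an image/mask pair: 4 rotations, each with and
    without a horizontal flip. Image and mask are transformed identically
    so that the labels stay aligned with the pixels."""
    for k in range(4):
        rot_img = np.rot90(image, k, axes=(0, 1))
        rot_msk = np.rot90(mask, k, axes=(0, 1))
        yield rot_img, rot_msk
        yield np.flip(rot_img, axis=1), np.flip(rot_msk, axis=1)


def build_training_set(tiles):
    """Keep only tiles with both building labels, then expand them."""
    samples = []
    for image, mask in tiles:
        if has_both_labels(mask):
            samples.extend(augment(image, mask))
    return samples
```

With this sketch, every selected tile contributes eight training samples, which partly compensates for the small number of tiles that contain ‘damaged buildings’.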

Results

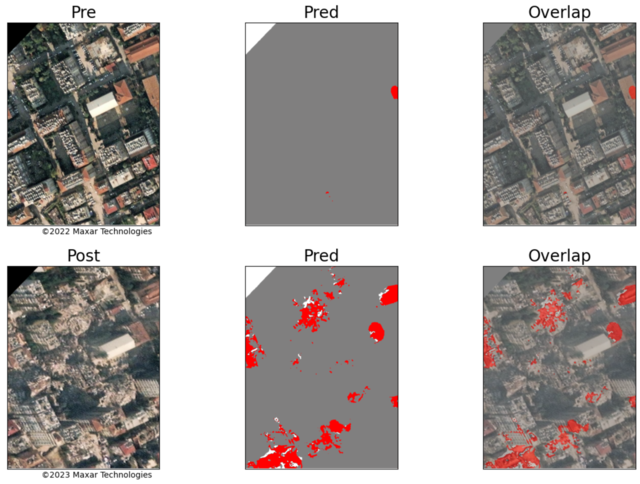

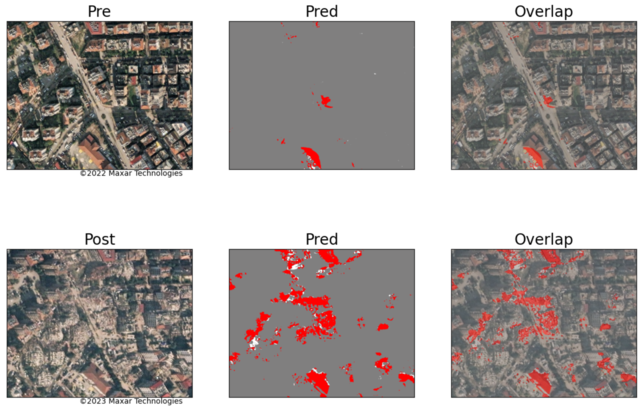

The detection results are shown in the figures above. Here, ‘Pre’ denotes the images taken before the earthquake and ‘Post’ the images taken after it. ‘Pred’ shows the predicted segmentation results (gray: ‘buildings’; red: ‘damaged buildings’; white: ‘other’). ‘Overlap’ shows the predicted results overlaid on the original images so that the accuracy of the results can be checked.
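As an illustration of how such ‘Pred’ and ‘Overlap’ views can be produced, the sketch below maps a predicted class mask to the gray/red/white color scheme and blends it over an RGB image. The class indices, the exact RGB values, and the blending factor `alpha` are assumptions made for this example, not details of our pipeline.

```python
import numpy as np

# Hypothetical class indices and colors (white, gray, red), indexed by class.
OTHER, BUILDING, DAMAGED = 0, 1, 2
PALETTE = np.array(
    [[255, 255, 255], [128, 128, 128], [255, 0, 0]], dtype=np.uint8
)


def colorize(pred):
    """Turn an (H, W) integer class mask into an (H, W, 3) RGB image."""
    return PALETTE[pred]


def overlay(image, pred, alpha=0.5):
    """Blend class colors over an (H, W, 3) uint8 image.

    Only pixels predicted as 'buildings' or 'damaged buildings' are tinted;
    'other' pixels keep the original image values."""
    out = image.astype(np.float32)
    colors = colorize(pred).astype(np.float32)
    tinted = pred != OTHER
    out[tinted] = (1.0 - alpha) * out[tinted] + alpha * colors[tinted]
    return out.round().astype(np.uint8)
```

Leaving ‘other’ pixels untouched keeps the underlying scene visible, which makes it easier to compare the prediction with what the eye sees in the image.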

The results suggest that the model works fairly well: the ‘Pre’ images are classified with almost no damaged areas, and the ‘damaged buildings’ detected in the ‘Post’ images can be confirmed by eye in the ‘Overlap’ images.
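The visual check could be complemented by a simple number, for example the share of predicted building pixels that are classified as damaged, computed separately for the ‘Pre’ and ‘Post’ predictions. The sketch below is only one possible way to do this; the metric, the function name, and the class indices are assumptions, and no such figures were computed for this article.

```python
import numpy as np

# Hypothetical class indices for the predicted masks.
OTHER, BUILDING, DAMAGED = 0, 1, 2


def damaged_share(pred):
    """Fraction of predicted building pixels that are labelled damaged.

    Returns 0.0 when the mask contains no building pixels at all."""
    n_damaged = int(np.count_nonzero(pred == DAMAGED))
    n_intact = int(np.count_nonzero(pred == BUILDING))
    total = n_damaged + n_intact
    return n_damaged / total if total else 0.0
```

A value close to zero for a ‘Pre’ image and a clearly higher value for the matching ‘Post’ image would be consistent with the visual impression described above.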

Summary

In this study, we investigated whether it is possible to detect the affected areas in Turkey using satellite images from Maxar. We expected the results to have low accuracy, since the image conditions of the training and test data were very different even though the same satellite series was used. However, the results showed that many of the damaged buildings could be detected. This indicates that a model trained on existing data can also detect affected areas in newly acquired images. More generally, this study illustrates how useful deep learning and satellite imagery can be for disaster management.